More than fifty people are huddled around a miniature soccer field.

In the middle of the field, two teams of white, toddler-sized humanoid robots are playing a game. This is Penn’s robot soccer team, the UPennalizers, versus the Australian team rUNSWift at the RoboCup International Quarterfinals in Mexico City.

With a minute left on the clock, rUNSWift is leading 8-0. At this point the Penn guys are pushing to score at least a single goal.

Junior Dickens He, one of the human members of the UPennalizers, has been on the edge of his seat since halftime. For Dickens and his colleagues (ten Penn grads and undergrads who have spent hundreds of hours programming the two-foot-tall bots, teaching them to see, to walk, to kick), this is it. This is the moment.

“Go! Go! Go!” Dickens is shouting. Pockets, one of the Penn bots, manages to steal the ball and kicks it toward his teammate, Tink. The pass fails, but the ball makes it into the rUNSWift goal box. “AHHH!” Dickens tugs at his hair.

The rUNSWift defenders jump into action, but they get a bit confused. One of them ends up kicking the ball closer to his own goal, where it teeters on the goal line, alone. “Ohhh!” Dickens shouts. So. Close.

There are just a few seconds left on the clock, and the rUNSWift goalie, who is standing right next to the ball, can’t see it because his shoulder pad is blocking his camera. Pockets ambles over, but then he loses track of the ball because the goalie is blocking his view.

“Five. Four. Three. Two.” At this point everyone is laughing. “ONE!”

That game was last July.

The UPennalizers have been training ever since. With the US Open coming up this Saturday, they’re looking leaner and meaner than ever before.

***

RoboCup is an international competition with one lofty goal: by 2050, to develop a humanoid robot soccer team that can take on the human World Cup champions. The robot players are completely autonomous. There’s no one standing on the sidelines with a remote control. Once the robots are on the field, they have to fend for themselves.

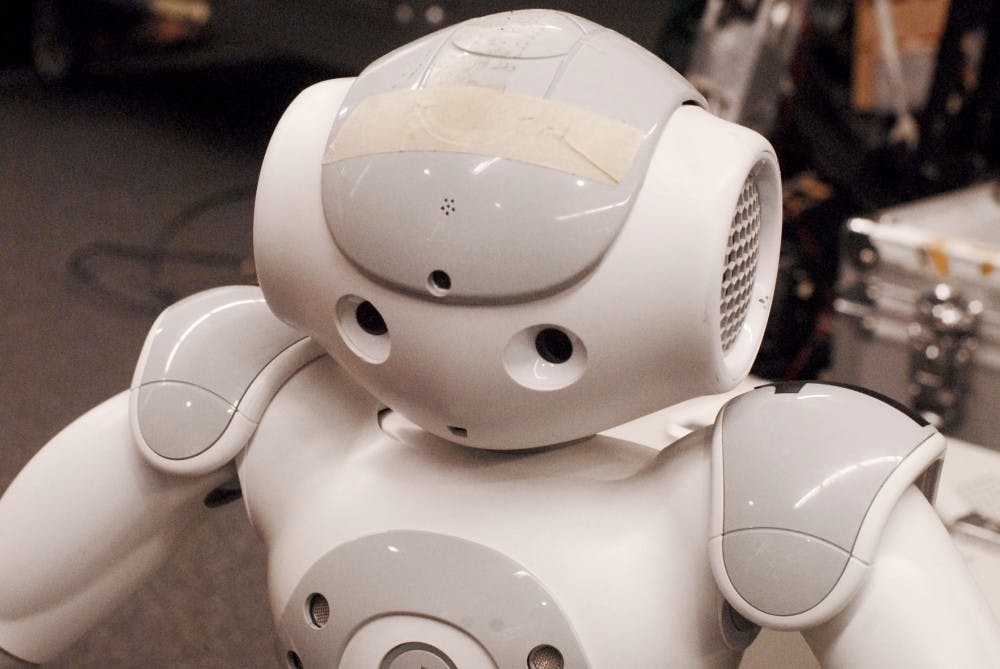

The human members of Penn’s RoboCup team are undergraduate and graduate researchers at the GRASP robotics lab. Penn competes in two robosoccer leagues. The UPennalizers (mostly undergrads) participate in the Standard Platform league. Every team in this league uses the same hardware: little white NAO robots developed by the French company Aldebaran. Team DARwin (mostly grad students), on the other hand, participates in the Kid-Sized Humanoid league, where teams have to develop their own hardware and build their robots from scratch. DARwin is the name of the robot they use. It stands for Dynamic Anthropomorphic Robot with Intelligence, and it was developed in collaboration with Virginia Tech, Purdue University and the Korean robot manufacturer ROBOTIS. (And while the UPennalizers didn’t have their best run, Team DARwin actually won first place in Mexico City.)

But hardware is only one part of the equation. Without the right software, these robots are just empty shells, inanimate bodies. The students, with the help of Electrical and Systems Engineering Professor Dan Lee, have developed a set of algorithms that tell the robots how to behave: how to see, walk, kick and play soccer. They load these algorithms onto the robots’ central processing units (their brains) and bring them to life.

The problem is, it’s not that easy getting a robot to play soccer. Actually, it’s really, really difficult.

“We don’t understand the brain,” says Dan Lee. Our optic nerves transmit thousands of electrical signals to the brain, and “from this somehow we are reasoning about other people and other objects in our environment.” Roboticists who work on artificial intelligence are trying to recreate parts of that process in machines.

Even really good AI can easily fail. Before the international competition last year, the UPennalizers had come up with some tricky new ways to beat the competition. But their robots depended on WiFi to communicate with each other and figure out where they were. And as it happened, the WiFi signal at the Mexico City stadium wasn’t very good.

Ironically, Lee says, “Robots are good at doing the things that humans have to go to school for.” Way back in 1997, IBM’s Deep Blue famously beat world champion Garry Kasparov at chess. And a couple of years ago, the same company’s Watson computer won a game of Jeopardy! against expert human players.

“The tasks they’re not good at are the things we take for granted,” Lee says. “Just being able to run and kick a ball—that’s really hard for a robot.”

***

Back at Penn’s General Robotics, Automation, Sensing and Perception (GRASP) Lab, it’s the Thursday of Spring Fling, but the RoboCup team is busy prepping for the US Open. Freshmen Sarah Deen and Alan Aquino are trying to smooth out a kink in Pockets’ walk algorithm, but it looks like a hardware problem. “The connection between the head and the body is not tight enough,” Dickens says. Pockets shakes and spasms. “Yeah, I’m going to kill Pockets,” Alan says. He lifts Pockets up and pushes the power button on the robot’s chest with his thumbs. “Man, it really is like suffocating someone. It’s so creepy!”

Meanwhile, Tatenda Mushonga, a mechanical engineering junior who joined the team last semester, has his laptop hooked up to one of the NAOs’ brains via an Ethernet cable. The problem is, they’re having a bit of trouble connecting. Master’s student Yida Zhang is trying to help. “I have no idea what is going on with your system right now,” he says. “I don’t know, man. When the robot rejects you…”

Dickens comes over. “What’s wrong?”

“Robots don’t like him!” Yida says. Tatenda starts cracking up.

One of the reasons that roboticists are investing in humanoid robots is that, according to Aditya Sreekumar, the robotics master’s student who’s leading the DARwin team this year, “People find it easier to interact with robots that have human features. A lot of our teammates have robots that they like more,” he says. “Steve really likes Betty. My favorite is Linus.”

All the robots, including the NAOs and the DARwins, have names. And they’re all pretty damn cute. The NAOs look kind of like monochrome baby versions of Mega Man. The dark gray DARwins are decidedly dopier. They’re shorter than the NAOs, with tinier heads and huge circular eyes. When they walk, they look like they’re slouching.

But there’s a bigger reason that humanoids matter: our world is built for humans, and if we want to incorporate robots into our daily lives, humanoids are the way to go. “You can’t expect a wheeled robot to climb up a ladder, or climb up stairs,” Aditya says.

After switching out Pockets for another bot named Rufio, Sarah and Alan are having success. Rufio stands up, and his eyes light up green. He immediately spots the little red ball lying near him, then starts walking toward it. “Attack,” he says in a tiny, mechanical voice (this behavior, team members note, is not as cute when it’s late at night).

“Rufio is always the best, right?” Dickens says. “Good old Rufio!” Alan agrees.

“Alright guys!” Dickens calls to the team, and they start planning their next practice. “How about Sunday?” he says. “I don’t know how much you guys do Spring Fling.” Everyone starts laughing. “But I don’t want to have anyone here on Saturday. I don’t want to be the asshole.”

***

A week before the US Open, Robotics PhD Steve McGill, a many-time RoboCup veteran, is passing the torch to the DARwin newbies. He’s not as involved with RoboCup anymore, but he still offers the team a lot of help and advice. “Each team member has got to have a role,” Steve coaches them. “You need one guy at the laptop, one guy has to be the handler.” Steve turns to Aditya Sreekumar, who’ll be leading Team DARwin this year, and grabs him by the shoulders. “You. You just try to relax and have fun.”

Now it’s Aditya’s turn to address everyone: “One thing is, during the match, don’t get tensed for any reason. Batteries die. Things go wrong. It’ll be ok.” Everyone has to stay focused.

But the US Open isn’t as high-pressure as the international competition. And more than anything, both competitions serve as a learning experience. At Penn, says Lee, “one of our objectives is to use this as a platform for teaching.” RoboCup is a way to introduce the big, core problems in robotics to undergrads. Before Lee joined the Penn faculty in 2001, there was a small RoboCup team, but it was limited to graduate students. Lee wanted to get younger students involved.

To get the team started, Lee wrote some of the core code for the robots, but he left it up to the students to perfect and innovate. “To have a freshman come in and do this from scratch is kind of impossible,” Lee says.

Mushonga sees RoboCup as a way to get a taste for the field of robotics. “It’s something I’ve been considering going into,” he says. “So far I seem to like it.”

Even Aditya, who now leads the team, says, “RoboCup was my first introduction to Linux programming.” Now Aditya is working on a bunch of other, bigger robotics projects at the GRASP lab.

Even before they learn to change and improve the robots’ software, students can learn a lot from reading and understanding the existing codebase. “Every day I learn something new about how the code works,” says Samarth Brahmbhatt, a robotics master’s student who happens to do this kind of stuff regularly for class. “Personally, I feel like I know just the tip of the iceberg.” It takes a ton of code to get a robot acting and thinking like a soccer star.

Really, RoboCup was started as a challenge for the research community. During a game, soccer bots have to face three basic challenges: locomotion, object recognition and localization. In other words, they have to be able to walk and kick straight, they have to be able to see stuff, and they have to figure out where on the field they are. “When you look at robotics in general,” Aditya says, “these are the three biggest challenges.”

Now that they are well established, the UPennalizers have even made their code open source. Any engineering department that wants to start its own RoboCup team can find Penn’s code base online and use it as a starting point. Penn has done really well at the competitions, especially considering that many competing teams consist exclusively of robotics PhD students. But the emphasis is always on learning.

Will they get good enough to play against human pros by 2050? “2050 is a really long time from now,” Steve says, smiling. “So sure. Shoot for the moon.”

Aditya’s more optimistic: “Yeah, probably.”

There are a lot of limitations to overcome first. There needs to be a battery powerful enough to last through a game (the batteries on the DARwins rarely last more than 10 minutes), and there needs to be a CPU powerful enough to process very sophisticated algorithms, but small enough to fit inside the bot. If it happens, Lee says, it’ll be transformative. Not that we’ll go around replacing human soccer players with robots. But any bot that’s good at playing soccer could probably be good at doing other, more badass stuff as well.

Lee’s students in the GRASP lab are currently working on a US Navy–funded project to build autonomous robots that can fight fires on ships. “Robots are a very good replacement for activities that are dangerous for humans,” Aditya says.

***

It’s NAOs versus DARwins in Levine Hall’s room 502. Rufio versus Linus.

Rufio starts off looking around the field in bewilderment, but then: “Oh! He sees the ball! He sees it,” Dickens says. Rufio starts charging. On the DARwin side, Linus is on it as well.

“Kick it, kick it!” Aditya cheers Linus on. Too late. Rufio kicks it toward the goal, and Linus, who is half a foot shorter and looks like Rufio’s baby brother, falls over backward. Then, he gets a bit confused and starts kicking toward his own goal. “No, no no!”

Aditya sets the ball at center field, and the bots square off again. Rufio walks over to his goal post. “He’s trying to find a start position on the court,” Dickens says. “Look at that, what good localization!”

Rufio spots the ball, he charges, aaaaaaaaand he kicks!

From last year's US Open:

http://www.youtube.com/watch?v=6l3bnOg4Twg

From the Mexico 2012 Tournament:

http://www.youtube.com/watch?v=lg4FWN4vx0c

At the GRASP Lab:

http://www.youtube.com/watch?v=qaGI4oRqcDs

Penn's video:

http://www.youtube.com/watch?v=HQHUw5SzYok